The AI infrastructure build is accelerating beyond historical benchmarks

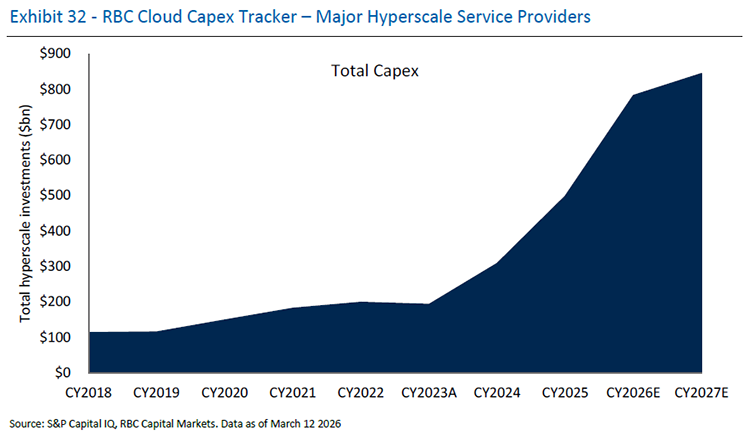

The race to build artificial intelligence capacity is reshaping industrial equipment markets in ways that extend far beyond the technology sector itself. Hyperscale providers — the major cloud and technology operators — are in the midst of an unprecedented buildout cycle, with capital expenditure projected to grow approximately 71 percent in 2026 alone, building on roughly 60 percent growth annually in both 2024 and 2025. These are not temporary spikes. Corporate commentary from large technology companies reveals a fundamental shift in capital allocation priorities, with executives consistently emphasizing that datacenter infrastructure capacity is now central to competitive positioning in AI.

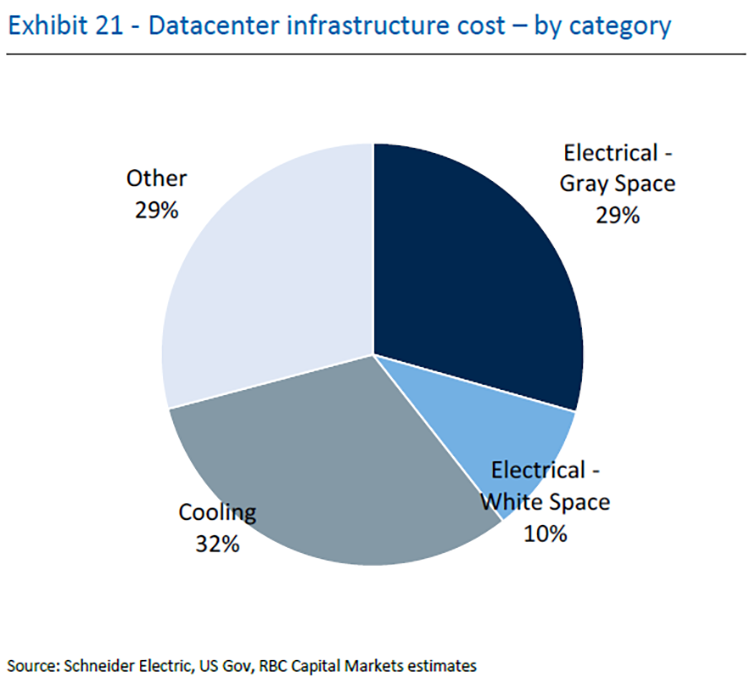

This buildout translates into enormous demand for equipment that most investors have historically overlooked. The physical construction of datacenters (power systems, cooling infrastructure, transformers, switchgears, and countless other components) represents a substantial portion of total facility cost. Analysis of a hypothetical 400-megawatt datacenter reveals a total project cost of approximately $11 billion, of which 29 percent relates to electrical and cooling equipment within the scope of industrial suppliers. For equipment providers, this translates into an estimated annual addressable market of approximately $220 billion over the next five years, more than triple the $60 billion estimate from just two years ago.

This market expansion reflects both the accelerating pace of datacenter deployment and the changing nature of that deployment. Where datacenters previously grew at a steady, predictable pace, today's growth is being pulled forward by the competitive urgency around artificial intelligence. Consensus expectations now envision datacenter capacity expanding from approximately 103 gigawatts today to 200 gigawatts by 2030, driven principally by AI infrastructure requirements.
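The capacity expectation above implies a compound annual growth rate that a short calculation can make explicit. The sketch below is illustrative only: the 103 GW and 200 GW figures come from the text, while the four-year horizon (taking 2026 as "today") is an assumption, and `implied_cagr` is a hypothetical helper name.

```python
def implied_cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate that takes `start` to `end` over `years`."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    return (end / start) ** (1.0 / years) - 1.0


START_GW = 103.0    # installed capacity "today", from the text
END_GW = 200.0      # expected capacity by 2030, from the text
ASSUMED_YEARS = 4   # assumption: "today" is 2026, so 2030 is four years out

cagr = implied_cagr(START_GW, END_GW, ASSUMED_YEARS)
print(f"Implied capacity CAGR over {ASSUMED_YEARS} years: {cagr:.1%}")
```

Under the four-year assumption the implied rate is roughly 18 percent a year; stretching the horizon to five years would lower it to roughly 14 percent.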

Exhibit 21 is titled "Datacenter infrastructure cost - by category" and presents a four-segment pie chart illustrating the proportional cost composition of datacenter infrastructure. The largest segment is Cooling at 32%, represented in medium gray. Electrical – Gray Space accounts for 29%, shown in dark navy blue. Other costs also represent 29%, displayed in light gray/blue. The smallest segment is Electrical – White Space at 10%, shown in a lighter blue tone. Together, the two electrical categories total 39% of infrastructure costs, while cooling and other costs account for the remaining 61%. Source: Schneider Electric, US Gov, RBC Capital Markets estimates.

Why datacenter architecture is changing

The primary driver of architectural change is not environmental concern or theoretical efficiency gains, but rather a practical constraint: power density is increasing faster than existing systems can accommodate. A decade ago, a representative datacenter rack consumed perhaps 10 kilowatts of power. Today, the global average has risen to approximately 17 kilowatts per rack. But artificial intelligence workloads operate in a different dimension entirely. Current advanced AI systems support configurations consuming 120-150 kilowatts per rack, with industry expectations pointing toward 1-megawatt-plus rack densities by 2027.

This escalation creates cascading technical problems. Supporting increasingly dense racks requires proportionally more ancillary components such as power shelves, conversion equipment, and cooling apparatus. These auxiliary systems occupy physical space within the rack cabinets, competing for real estate with the computing equipment that generates revenue. Industry analysis suggests that powering a megawatt-scale rack might require 1.5 racks-worth of power shelves alone, before accounting for other necessary components. This space constraint forces a fundamental rethinking of facility architecture.

"We estimate that 80-85% of critical power is actually used by the computing equipment in today's datacenters, given losses. In contrast, through fewer conversion steps a DC-based design can be ~95% efficient."

Mark Fielding, Head of European Industrials Research, RBC Capital Markets

The physical limitations extend to electrical infrastructure itself. Electrical cables have maximum current ratings, beyond which they become inefficient or risk failure. Accommodating the power demands of next-generation AI would require substantially thicker cables using significantly more copper and creating installation and thermal management challenges. The International Copper Association estimates that datacenter copper consumption alone will reach approximately 725,000 tons annually by 2030, representing 2.5 percent of global copper consumption — a staggering figure that underscores the scale of the infrastructure required.

Beyond spatial constraints, facility operators face efficiency challenges that ripple through the electrical grid. Today's datacenters operate on 415-480 volt alternating current systems that convert power multiple times before reaching computing equipment. Each conversion step introduces losses. The uninterruptible power supply, a critical component that provides instantaneous backup power during outages, operates at only 92-96 percent efficiency because it converts alternating current to direct current and then back to alternating current. Across the entire facility, only 80-85 percent of input power actually reaches computing equipment; the remainder dissipates as heat or is lost in conversion processes. Datacenter power consumption also fluctuates dramatically: individual graphics processors can swing between 10 and 50 percent of maximum power consumption within fractions of a second, which creates grid stability challenges for utilities.
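Because each conversion step passes on only a fraction of what it receives, end-to-end efficiency is the product of the per-stage efficiencies. The sketch below illustrates that compounding under stated assumptions: only the UPS figure (the midpoint of the 92-96 percent range) is taken from the text, while the other stage names and values are hypothetical placeholders chosen for illustration, not measured data.

```python
from math import prod

# Per-stage efficiencies for a simplified AC power chain.
# Only the UPS value is grounded in the text; every other stage
# and value is a hypothetical placeholder.
STAGE_EFFICIENCY = {
    "medium_voltage_transformer": 0.985,  # hypothetical
    "ups_double_conversion": 0.94,        # midpoint of 92-96% from the text
    "power_distribution_unit": 0.99,      # hypothetical
    "rack_power_supply": 0.94,            # hypothetical
    "cabling_and_busway": 0.98,           # hypothetical
}


def end_to_end_efficiency(stages: dict[str, float]) -> float:
    """Multiply stage efficiencies to get the share of input power delivered."""
    for name, eff in stages.items():
        if not 0.0 < eff <= 1.0:
            raise ValueError(f"efficiency for {name} must be in (0, 1]")
    return prod(stages.values())


total = end_to_end_efficiency(STAGE_EFFICIENCY)
print(f"Share of input power reaching compute: {total:.1%}")
```

With these placeholder values the chain lands inside the 80-85 percent range quoted above, which shows how several individually efficient stages still compound into a double-digit loss.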

The proposed solutions: 800VDC and beyond

The industry response involves shifting to higher voltages and direct current systems. New architectural approaches offer varying tradeoffs. An 800-volt direct current system can carry approximately three times the power of current-generation alternating current cabling for a given cable thickness, while reducing overall copper requirements by 40-70 percent. This represents a meaningful shift in both capital intensity and environmental impact.

Moving to direct current architectures reduces conversion losses significantly. A well-designed direct current system can achieve approximately 95 percent efficiency, compared to 80-85 percent for current alternating current facilities. This translates not just into reduced electricity costs (though electricity represents only approximately 10 percent of total cost of ownership) but also into meaningful reductions in electricity availability constraints. In regions where power infrastructure is stretched, the ability to require less input power for the same computing capacity becomes strategically valuable.
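The effect on required input power can be sketched with simple arithmetic: input power equals compute load divided by efficiency. The efficiencies below come from the text; the 100 MW compute load and the helper name `required_input_mw` are hypothetical, chosen only to make the comparison concrete.

```python
def required_input_mw(compute_load_mw: float, efficiency: float) -> float:
    """Input power needed to deliver `compute_load_mw` at a given efficiency."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return compute_load_mw / efficiency


COMPUTE_LOAD_MW = 100.0             # hypothetical compute load
AC_EFFICIENCY_RANGE = (0.80, 0.85)  # current AC facilities, from the text
DC_EFFICIENCY = 0.95                # well-designed DC system, from the text

dc_input = required_input_mw(COMPUTE_LOAD_MW, DC_EFFICIENCY)
savings = {}
for ac_eff in AC_EFFICIENCY_RANGE:
    ac_input = required_input_mw(COMPUTE_LOAD_MW, ac_eff)
    savings[ac_eff] = ac_input - dc_input
    print(
        f"AC at {ac_eff:.0%}: {ac_input:.1f} MW in, "
        f"DC at {DC_EFFICIENCY:.0%}: {dc_input:.1f} MW in, "
        f"saving {savings[ac_eff]:.1f} MW ({savings[ac_eff] / ac_input:.1%})"
    )
```

For the same compute load, input power falls by roughly 11 to 16 percent, depending on where in the 80-85 percent range the AC facility sits.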

Industry consensus points toward a phased adoption timeline. The earliest approach, the "side-car" architecture, requires minimal facility modifications and allows retrofitting of existing structures; it may be available commercially by late 2027 or 2028. More sophisticated hybrid designs that more completely integrate direct current systems are expected to emerge by 2027-2028, while the most elegant solution (direct-to-chip power delivery with solid-state transformers that can convert from grid-voltage alternating current directly to 800-volt direct current) remains a post-2030 proposition.

Component disruption: Real but manageable

The shift toward higher-voltage direct current architectures will not affect all components equally. Analysis of facility infrastructure costs reveals distinct exposure profiles. The uninterruptible power supply, which represents approximately 16 percent of datacenter infrastructure costs, faces the most significant disruption risk. In 800-volt direct current designs, particularly those incorporating battery energy storage systems, the traditional UPS function may be replicated by rack-level battery storage or facility-scale battery systems, potentially displacing traditional UPS equipment entirely.

Additional components face more modest pressure. Medium-voltage transformers represent only 2 percent of infrastructure costs but are at risk of displacement by more flexible power electronics alternatives. Distribution switchgears (11 percent of costs) would be fewer in number in a higher-voltage system, though with higher individual unit value. Power distribution units and conventional cable management systems are at risk of gradual displacement as new power architectures become standard.

"Not every datacenter will run on 800V DC. Industry estimates are for only 15-25% of datacenters to be 800V-equipped by 2030."

Mark Fielding, Head of European Industrials Research, RBC Capital Markets

Yet the disruption picture is materially constrained by adoption timelines. Industry estimates suggest that only approximately 15-25 percent of datacenters will operate on 800-volt direct current by 2030, with the vast majority of those likely adopting the "side-car" approach that adds complexity incrementally rather than fundamentally restructuring existing designs. This gradual transition timeline provides incumbent equipment suppliers time to develop new offerings and reposition portfolios.

Moreover, the architectural transition introduces new component opportunities that offset traditional product displacement. Higher-voltage direct current systems require rectifiers (devices that convert alternating current to direct current), which represent a new content opportunity. Solid-state transformers, which offer benefits including compact size, efficiency gains, and superior integration with battery storage systems, represent another emerging opportunity. Racks themselves become physically larger and more technically complex to accommodate higher power densities, creating incremental content. Most significantly, cooling infrastructure becomes more critical and more technically sophisticated as power density increases. Liquid-cooling solutions, which can more efficiently manage the thermal output of high-density AI workloads, may represent expanding opportunities even as traditional air-cooling margins compress.

The market opportunity breakdown

Understanding the datacenter equipment opportunity requires granular analysis of component costs. A theoretical 400-megawatt facility carries an estimated total cost of approximately $11 billion. Approximately 64 percent reflects information technology equipment (servers and processors), 7 percent represents the physical building structure, and 29 percent comprises electrical and cooling infrastructure, the addressable market for equipment suppliers.
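The breakdown above can be turned into dollar figures with a few lines of arithmetic. The sketch below uses only the total cost, facility size, and percentage shares stated in the text; the function and key names are hypothetical.

```python
TOTAL_COST_USD = 11e9   # approximate total project cost, from the text
FACILITY_MW = 400       # theoretical facility size, from the text

# Cost shares from the text; they should sum to 100 percent.
COST_SHARES = {
    "it_equipment": 0.64,
    "building": 0.07,
    "electrical_and_cooling": 0.29,
}


def cost_breakdown(
    total_usd: float, shares: dict[str, float], megawatts: float
) -> dict[str, tuple[float, float]]:
    """Return (total USD, USD per MW) for each cost category."""
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("shares must sum to 1")
    return {
        name: (total_usd * share, total_usd * share / megawatts)
        for name, share in shares.items()
    }


result = cost_breakdown(TOTAL_COST_USD, COST_SHARES, FACILITY_MW)
for name, (usd, usd_per_mw) in result.items():
    print(f"{name:>24}: ${usd / 1e9:.2f}bn total, ${usd_per_mw / 1e6:.2f}m per MW")
```

On these inputs, electrical and cooling infrastructure comes to about $3.2 billion for the facility, or about $8 million per megawatt.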

The $220 billion annual addressable market translates into specific opportunities per facility type. Electrical equipment companies have sized their revenue opportunity in artificial intelligence datacenters at approximately $3-$3.5 million per megawatt, compared to approximately $1.2 million per megawatt in traditional non-AI facilities. This differential reflects both increased complexity and increased redundancy requirements in AI-focused facilities.

"Big Tech messaging continues to be very bullish about the need for AI-related spend and their confidence to raise capital allocation there."

Mark Fielding, Head of European Industrials Research, RBC Capital Markets

Datacenter buildout as the primary growth driver

The scale of datacenter buildout is reshaping the growth trajectories of industrial equipment suppliers. Historical analysis reveals a strong correlation between datacenter exposure and organic growth rates. Providers with the highest datacenter revenue exposure have demonstrated 5-year organic growth averaging approximately 18 percent, compared to single-digit growth for less-exposed suppliers. This pattern has intensified in recent years; the correlation between datacenter exposure and growth is substantially stronger in the recent 5-year period than in the preceding 10-year period, indicating accelerating datacenter importance.

This growth concentration is mathematically significant. If a major diversified equipment supplier applies growth expectations from its most datacenter-focused competitors (27-29 percent organic growth) to its own datacenter revenue segment, it implies that the remainder of the business need grow only approximately 2 percent to achieve overall guidance. For some companies with higher datacenter exposure percentages, the mathematics is even more extreme; portions of the business would need to shrink while overall growth targets remain achievable, provided datacenter segments grow in line with industry leaders.
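The arithmetic behind this argument is a weighted average: overall growth equals the datacenter share times datacenter growth plus the remaining share times the growth of the rest, which can be rearranged to solve for the rest. In the sketch below, the 28 percent datacenter growth is the midpoint of the 27-29 percent range in the text; the overall guidance and the exposure shares are hypothetical placeholders, not company data.

```python
def implied_remainder_growth(
    total_growth: float, dc_share: float, dc_growth: float
) -> float:
    """Growth the non-datacenter business needs to hit `total_growth`.

    Rearranges: total = dc_share * dc_growth + (1 - dc_share) * remainder.
    """
    if not 0.0 <= dc_share < 1.0:
        raise ValueError("dc_share must be in [0, 1)")
    return (total_growth - dc_share * dc_growth) / (1.0 - dc_share)


DC_GROWTH = 0.28               # midpoint of 27-29% from the text
TOTAL_GROWTH_GUIDANCE = 0.085  # hypothetical overall organic growth target
EXPOSURES = (0.15, 0.25, 0.40) # hypothetical datacenter revenue shares

implied = {
    share: implied_remainder_growth(TOTAL_GROWTH_GUIDANCE, share, DC_GROWTH)
    for share in EXPOSURES
}
for share, growth in implied.items():
    print(f"Datacenter share {share:.0%}: remainder must grow {growth:+.1%}")
```

With these placeholder inputs, a 25 percent datacenter share leaves the remainder needing about 2 percent growth, and the implied figure turns negative once the datacenter contribution alone exceeds the overall target.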

This dynamic explains why the fastest-growing equipment suppliers are nearly invariably those with the highest datacenter exposure. The few exceptions involve suppliers with exceptional positioning in specific high-growth subsegments (such as liquid cooling solutions) or those benefiting from grid infrastructure trends that parallel datacenter buildout.

Exhibit 32 is titled "RBC Cloud Capex Tracker – Major Hyperscale Service Providers" and displays a filled area chart measuring total hyperscale investments in billions of US dollars (y-axis, ranging from $0 to $900bn) across calendar years on the x-axis (CY2018 through CY2027E). The data series is labeled "Total Capex" and rendered in dark navy blue. Spending levels were relatively modest and gradual through the early part of the period: approximately $100bn in CY2018, rising incrementally to roughly $110bn in CY2019, ~$140bn in CY2020, ~$180bn in CY2021, and plateauing near $200bn in CY2022 and CY2023A (actual). A pronounced inflection begins in CY2024, with investment rising sharply to approximately $270bn, followed by an accelerating trajectory to roughly $480bn in CY2025. Estimates project further steep growth to approximately $790bn in CY2026E and approximately $840bn in CY2027E, representing a roughly 8x increase from the CY2018 baseline. Source: S&P Capital IQ, RBC Capital Markets. Data as of March 12, 2026.

Sustained capital allocation from technology leaders

Perhaps the most compelling aspect of the datacenter equipment opportunity is the explicit commitment from hyperscale technology operators to sustained infrastructure investment. Recent earnings calls and industry commentary reveal a qualitative shift in executive sentiment compared to historical patterns. Where previous cycles generated uncertainty about the timeline to profitability for artificial intelligence infrastructure, current commentary reflects conviction about artificial intelligence economics and competitive necessity around infrastructure capacity.

During Q4 2025 results, one major technology platform noted that "demands for compute resources across the company have increased even faster than our supply" and flagged expectations for "significantly more capacity" in 2026 while still anticipating constraint through much of the year until additional facilities reach production. Another reported expanding 2026 capital expenditure guidance to a $175-185 billion range "with investments ramping over the course of the year," emphasizing that "investments made in artificial intelligence are already translating into strong performance."

These are not discretionary expenditures but competitive necessities. The investment responds to computing capacity that is already constraining business performance, not to speculative future returns. This creates a structural backdrop in which the equipment supply chain faces sustained demand pull rather than inventory build cycles vulnerable to demand fluctuations. That sets the current cycle apart from prior ones, in which infrastructure was deployed ahead of anticipated demand.

Mark Fielding authored "RBC Imagine™: Building AI - Datacenter equipment growth drivers remain strong," published on March 20, 2026. For more information on the full report, please contact your RBC representative.